Abstract

The 2026 National Defense Authorization Act directs the Department of War to define cognitive warfare and distinguish it from existing information-related activities. This article responds directly to that mandate by proposing a decision-centric definition of cognitive warfare and delineating it from information operations, psychological operations, and influence activities across six dimensions. The definition is illustrated through a notional case study and validated against observed Russian and Chinese cognitive warfare operations in the Baltic states and Taiwan.

Disclaimer: “The views expressed are those of the authors and do not reflect the official policy or position of the US Air Force, Department of War, or the US Government.”

The NDAA Mandate: Clarifying Cognitive Warfare and Narrative Intelligence

The 2026 National Defense Authorization Act (NDAA) directs the Secretary of War to provide a report defining cognitive warfare and narrative intelligence, clarifying how these concepts relate to existing doctrinal elements including information warfare, psychological operations (PSYOPs), and military information support operations (MISO) (see Senate Armed Services Committee Report 119-39, “Narrative Intelligence and Cognitive Warfare”). The committee specifically notes persistent definitional ambiguity and warns that conflation among information warfare, cyberwarfare, influence operations, and cognitive warfare contributes to a lack of strategic clarity.

This directive is not semantic housekeeping. It reflects congressional concern that peer competitors are systematically investing in the cognitive domain, integrating military and civilian instruments to achieve advantage below the threshold of armed conflict. If the Department of War (DoW) is to respond coherently, cognitive warfare must be defined precisely, distinguished from adjacent Operations in the Information Environment (OIE) concepts, and tied to operational evaluative criteria. This article responds directly to that mandate.

The Problem: Conflation Across the Information Environment

DoW and Air Force doctrine describes the information environment as consisting of physical, informational, and cognitive dimensions. However, operational practice of cognitive warfare tends to focus on the informational dimension: platforms, messages, dissemination, and reach.

This mismatch has produced three recurring conceptual problems. (1) Message-Centric Framing: Cognitive warfare is treated as persuasion or messaging rather than as decision disruption; (2) Unidirectional Modeling: Influence activities are analyzed as actor-to-audience flows, underemphasizing attacker/defender adaptations and contested interactions; and (3) Engagement-Based Metrics: Success is evaluated through exposure, reach, or sentiment rather than decision performance. The NDAA mandate requires resolving this ambiguity.

Review of a Systematic Definitional Framework of Cognitive Warfare

A systematic definitional framework of cognitive warfare was recently introduced in “Cognitive Warfare: Definition, Framework, and Case Study” (Rushing, Hersch, & Xu 2026) and is briefly summarized here. The paper begins by identifying three attribute families for characterizing cognitive warfare: objectives (the cognitive effects an actor seeks to produce or prevent), capabilities (the mechanisms available to achieve or deny those effects under contest), and cost and efficiency (the resources required to sustain advantage over time). These families apply symmetrically to both attackers and defenders, reflecting the paper’s central argument that cognitive warfare is an interactive contest rather than a unidirectional influence process.

To distinguish cognitive warfare from related activities, the authors observe that all military operations ultimately depend on decision cycles, especially the Observe, Orient, Decide, and Act (OODA) loop originally articulated by Col. John Boyd. Cognitive warfare targets this cycle directly. Correspondingly, the paper presents formal definitions of four core concepts:

Cognitive attacks: Operations that target human minds, aiming to manipulate perceptions and beliefs in one or multiple time horizons, which may be weaponized and enhanced through technology and deceptive information, typically to affect the decision-making and actions of individuals or broader populations to gain advantage (adapted from Rushing & Xu, 2026).

Cognitive defenses: Operations and capacities that protect human minds from adversarial efforts to manipulate perceptions and beliefs, preserving or restoring sound decision-making and action in pursuit of sustained advantage in one or multiple time horizons.

Cognitive warfare: A sustained and adaptive contest over human decision-making in which adversaries seek relative advantage by shaping or disrupting perception, interpretation, judgment, and action over time horizons.

Cognitive superiority: A condition in which one actor maintains a sustained comparative advantage in the cognitive domain as manifested by the cognitive capabilities to achieve its cognitive objectives across defined time horizons by preserving or degrading decision performance within the OODA cycle, at will, at higher speed, and with much higher cost-effectiveness than its opponent.

Note that three elements are essential to these definitions: (1) Decision-Centric. The primary target is human cognition, not merely information systems or public opinion. (2) Interactive and Adaptive. Cognitive warfare is a contest, not a broadcast. (3) Multi-Horizon. Effects occur across Acute (short-term) disruption and Chronic (long-term) conditioning. Cognitive warfare is therefore distinguished not by tools, but by target and evaluative criteria.

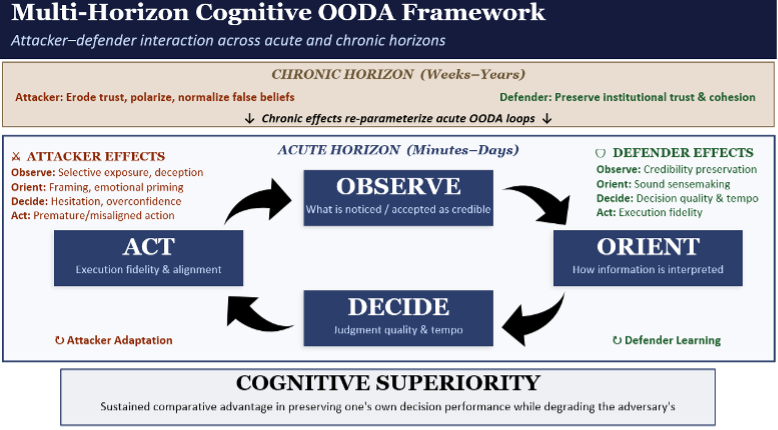

The paper operationalizes these definitions through the Multi-Horizon Cognitive OODA Framework, which distinguishes Acute (short-horizon) effects that manifest within minutes to days as immediate OODA disruption from Chronic (long-horizon) effects that accumulate over weeks to years, gradually shifting trust calibration, belief alignment, and interpretive priors that shape subsequent decision cycles. Attacker and defender effects are mapped symmetrically to each stage of the OODA loop, with feedback loops reflecting the adaptive nature of both sides.

To demonstrate the framework’s analytic utility, the paper presents a notional case study set in the fictional partner nation of “Norland,” where a near-peer adversary seeks to derail a logistics agreement through cognitive rather than kinetic action. The case study specifies attacker and defender objectives, capabilities, and cost structures, and evaluates cognitive superiority using decision-centric indicators including decision latency, orientation divergence, and action misalignment. The analysis concludes that the attacker achieves cognitive superiority not through control of belief but through comparative performance advantages in adaptation speed, amplification velocity, and asymmetric cost imposition.

Response to the NDAA Mandate

The present article builds on, but goes beyond, that OODA loop framework by addressing the NDAA mandate directly for a policy and practitioner audience, translating the framework’s core concepts into the NDAA compliance argument and adversary analysis that follow. We refer readers interested in the formal attribute structure, the complete OODA-stage effects mapping, or the structured case methodology to the authors’ foundational paper, “Cognitive Warfare: Definition, Framework, and Case Study.”

Distinguishing Cognitive Warfare from Related Concepts

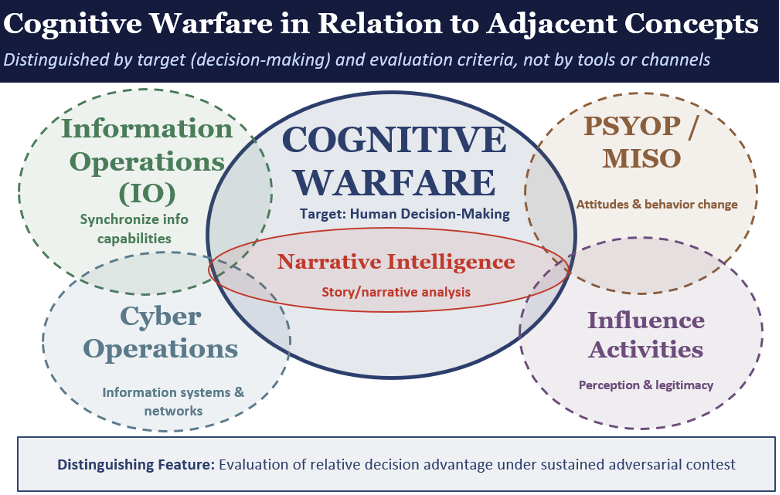

The NDAA requires an assessment of how cognitive warfare relates to existing doctrinal elements, which in turn requires explicit delineation of cognitive warfare from related concepts. Rushing, Hersch & Xu (2026) develop this delineation formally, comparing cognitive warfare against information operations, PSYOPs, and influence activities across six dimensions: primary target, central objective, interaction model, time horizon, level of analysis, and evaluation criteria. The Venn diagram in Figure 1 illustrates how cognitive warfare intersects with, but is not reducible to, four adjacent concepts: information operations, psychological operations, influence activities, and cyber operations. The paragraphs that follow analyze how each relates to cognitive warfare.

Figure 1. Cognitive Warfare in Relation to Adjacent Concepts. Cognitive warfare intersects with information operations, psychological operations, influence activities, and cyber operations but is not reducible to any of them. It is distinguished by its target, human decision-making, and its evaluative criteria, not by tools or delivery channels.

Cognitive Warfare: The Center. Cognitive warfare occupies the center of Figure 1 because it is defined by a target and evaluative criterion that none of the adjacent concepts share as their primary focus: human decision-making. Where the surrounding concepts are typically organized around tools, audiences, or coordination functions, cognitive warfare is organized around OODA loop performance: whether actors can observe accurately, orient coherently, decide confidently, and act effectively under sustained adversarial pressure.

Information Operations (IO). As defined in current joint doctrine, IO integrates information-related capabilities across the information environment to support mission objectives. Its primary function is coordination and synchronization—ensuring that cyber, electronic warfare, MISO, public affairs, and other capabilities work in concert. IO overlaps with cognitive warfare where synchronized information activities produce cognitive effects on adversary decision-making. But IO is not defined by decision degradation as its central objective, nor does it model the adaptive attacker–defender interaction that characterizes cognitive warfare. An IO campaign can succeed on its own coordination-centric terms—capabilities synchronized, messages delivered—without ever measuring whether adversary decision quality was actually degraded. The overlap in Figure 1 reflects that IO provides delivery mechanisms and coordination architectures that cognitive warfare may employ, but the evaluative lens differs fundamentally.

Psychological Operations and Military Information Support Operations (PSYOP/MISO). PSYOP and MISO seek attitudinal and behavioral change in target audiences through targeted messaging and persuasion. The overlap with cognitive warfare is substantial: both concern the human mind, and PSYOP products may produce cognitive effects that degrade adversary decision-making. However, PSYOP is typically modeled as a unidirectional activity, in which an actor crafts and delivers messages to an audience, and is evaluated through attitudinal or behavioral change indicators. Cognitive warfare, by contrast, models a dynamic and adaptive contest in which both attacker and defender continuously adjust. A PSYOP campaign may shift attitudes without degrading decision performance; conversely, cognitive warfare may degrade decisions without changing attitudes at all. For example, an attacker can introduce friction by inducing hesitation, flooding the information environment, or fracturing orientation among staff, rather than by changing what anyone believes.

Cyber Operations. Cyber operations target information systems and networks, the technical infrastructure through which information flows. The overlap with cognitive warfare arises because cyber capabilities can serve as enablers of cognitive effect: compromised platforms can be used to amplify deceptive narratives, manipulate what information reaches decision-makers, or undermine confidence in digital systems. But cyber operations are defined by their target domain (networks and systems) rather than by cognitive outcomes. A successful cyber operation may degrade a network without producing any cognitive effect on human decision-making; a successful cognitive attack may degrade decisions without touching a single network. The overlap in Figure 1 is real but bounded: cyber provides access and delivery mechanisms, while cognitive warfare defines success by what happens in the human decision cycle.

Influence Activities. Influence activities encompass a broad range of efforts to shape perception, legitimacy, and political will—including strategic communication, public diplomacy, public affairs, and deterrence signaling. Their overlap with cognitive warfare is wide: influence activities frequently seek to alter how audiences perceive events, which can affect downstream decisions. But influence activities are often evaluated by reach, engagement, and sentiment indicators rather than by decision-centric outcomes. They also tend toward unidirectional models—actor shaping audience perception—rather than the adaptive attacker–defender contest that defines cognitive warfare. Strategic communication and public affairs, for example, aim to inform domestic and international audiences and maintain institutional credibility. These functions may indirectly support cognitive defense by preserving trust in authoritative channels, a critical element of the chronic horizon, but they are not designed to assess or counter adversary decision-degradation campaigns. The boundary in Figure 1 reflects that influence activities may contribute to cognitive effects but are not inherently structured as decision-degradation campaigns evaluated through OODA performance.

Narrative Intelligence: Nested Within. Narrative intelligence is depicted as nested within cognitive warfare because it serves as an analytic function that directly enables cognitive warfare assessment. The NDAA defines narrative intelligence as intelligence concerning the story or narrative an adversary is attempting to build. It is not equivalent to counter-messaging. Rather, it shifts analysis from tracking what adversaries are saying to understanding what decisions they are trying to degrade. Narrative intelligence contributes to cognitive warfare in three ways. First, it enables early detection of chronic conditioning by identifying long-horizon efforts to erode trust, polarize identity, or invert credibility. Second, it supports anticipation of acute exploitation by analyzing how adversaries may leverage crises to impose cognitive friction. Third, it improves decision resilience. By understanding adversary narrative objectives, commanders can preserve attribution discipline, reduce reactionary overreach, and maintain coherent orientation. Narrative intelligence also serves information operations and irregular warfare more broadly, but its decision-vulnerability focus makes it most naturally situated within the cognitive warfare frame.

The distinguishing feature across all five relationships is stated at the bottom of Figure 1: cognitive warfare is evaluated by relative decision advantage under sustained adversarial contest. Cyber warfare can be evaluated by an analogous standard of relative advantage, but within the cyber domain, which overlaps with, but is different from, the cognitive domain. Thus, each adjacent concept may contribute to cognitive effects, but none centers decision performance as its defining criterion. This is the boundary the NDAA mandate requires the DoW to draw.

Cognitive Superiority and the NDAA Evaluative Standard

If air superiority means freedom of action without prohibitive interference, cognitive superiority is the analogous condition in the cognitive domain. That is, superiority does not require control of belief. It requires relative decision advantage under sustained contest. As Figure 2 illustrates, this advantage is structured across two temporal horizons. Chronic conditioning re-parameterizes how targets observe, orient, decide, and act before a crisis begins. Acute disruption then exploits those degraded conditions in real time: shaping perception, biasing interpretation, inducing hesitation, and eliciting misaligned action. Defenders maintain cognitive superiority by protecting each corresponding phase of the OODA loop through adaptation and learning.

Figure 2. Multi-Horizon Cognitive OODA Framework. Cognitive warfare operates across two temporal horizons. Chronic conditioning (weeks to years) erodes institutional trust, polarizes identity, and normalizes false beliefs, re-parameterizing how actors observe, orient, decide, and act. Acute disruption (minutes to days) exploits these conditions to degrade decision performance in real time. Attacker and defender effects map to each phase of the OODA loop, and cognitive superiority is achieved through sustained relative advantage in decision performance.

This is the evaluative standard that distinguishes cognitive warfare from adjacent concepts. Where information operations seek synchronization and psychological operations seek persuasion, cognitive warfare seeks relative decision advantage. When a contested information event is framed through this lens, the focus shifts to decision latency, attribution discipline, verification tempo, organizational clearance speed, and recovery time. The operational center of gravity becomes decision resilience.

To satisfy the NDAA directive, a definition of cognitive warfare must clearly distinguish it from IO, PSYOP, and influence; identify decision degradation as the central outcome; recognize its adaptive attacker–defender character; incorporate both acute and chronic horizons; and provide evaluative criteria beyond engagement metrics. The definition and framework proposed here satisfy each of these requirements.

Applying the Framework to Understand Cognitive Warfare in the Field

Russia’s campaign in the Baltic states exemplifies the Chronic-to-Acute structure. Over years, Russian-linked operations have targeted Russian-speaking minorities in Estonia, Latvia, and Lithuania to erode trust in democratic institutions, polarize ethnic identity, and elevate pro-Kremlin media at the expense of authoritative sources. This is classic chronic conditioning that re-parameterizes how populations observe and orient. When Acute opportunities arise, such as elections or NATO exercises, this pre-conditioned environment is exploited through coordinated narrative surges: troll accounts spread disinformation questioning the integrity of electronic voting, deepfakes amplify fear, and information flooding overwhelms institutional response capacity. The result is not persuasion in the PSYOP sense but decision degradation: delayed government responses, divergent public interpretations, and eroded confidence in collective action. Russia’s Operation Doppelgänger, a network of cloned news sites impersonating reputable outlets across multiple EU languages, demonstrates the industrial scale at which chronic credibility inversion now operates.

China’s cognitive warfare against Taiwan follows a parallel logic. Beijing’s United Front Work Department coordinates long-horizon influence operations that pursue Chronic degradation by co-opting media elites and promoting narratives designed to weaken Taiwanese resolve and the US-Taiwan relationship. Taiwan’s National Security Bureau reported over 45,000 fake online accounts detected in 2025, up from 28,216 the prior year, with over 2.3 million pieces of disinformation recorded. During Acute windows such as the 2024 presidential election, these chronic investments were activated: deepfake videos of candidates circulated on TikTok, generative AI reduced the manpower needed to scale coordinated inauthentic behavior, and the dominant narrative, that the United States would abandon Taiwan in a crisis, was amplified to degrade public confidence in alliance commitments. The Pentagon’s 2025 report to Congress confirmed that Beijing considers cognitive domain operations a key component of its pressure campaign against Taiwan, combining military exercises with proxy accounts to exaggerate PLA capabilities and spread disinformation about allied willingness to intervene.

In both cases, the adversary’s objective is not to “win an argument” but to degrade decision performance, precisely the criterion that distinguishes cognitive warfare from information operations or influence activities under the framework proposed here.

Organizational Considerations

Cognitive warfare is inherently joint and cross-functional. No single organization owns cognitive advantage. Instead, it emerges from the combined efforts of intelligence, operations, cyber, and communication functions.

This creates a coordination problem. Each element contributes, but no entity is responsible for evaluating overall cognitive performance.

Cognitive warfare therefore requires integration under a unified, decision-centric framework. The NDAA report should designate a lead integrator for cognitive warfare assessment—not to centralize capabilities, but to ensure consistent evaluation across contributing functions.

Without clear ownership, cognitive warfare risks remaining everyone’s concern and no one’s responsibility.

Implications for the Joint Force

If cognitive warfare is to be treated as a distinct operational concern, the Joint Force must adapt in four areas. Operational design must institutionalize decision-centric planning, incorporating cognitive objectives and defensive measures that target OODA integrity. Metrics must shift from reach, impressions, and sentiment toward time-to-decision, decision reversals, divergent interpretations, and recovery time. Commanders must reduce acute vulnerability through pre-authorized messaging lanes and streamlined coordination that compress response latency. And training must inject cognitive stress—ambiguity, narrative surges, and attribution friction—not merely kinetic scenarios.
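As a purely illustrative sketch of what decision-centric measurement could look like in an exercise setting, the short Python example below computes four of the metrics named above (time-to-decision, decision reversals, divergent interpretations, and recovery time) from a timestamped staff log. The log schema, the event labels, and the specific formulas are assumptions made for illustration; they are not drawn from the NDAA, from joint doctrine, or from the Rushing, Hersch & Xu framework, and an operational implementation would need validated definitions for each indicator.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class DecisionEvent:
    """One entry in a hypothetical exercise log (the schema is an assumption)."""
    time: datetime
    kind: str        # "trigger", "assessment", "decision", or "recovered"
    value: str = ""  # interpretation label or chosen course of action


def _ordered(events):
    """Return the events sorted by timestamp."""
    return sorted(events, key=lambda e: e.time)


def _first_time(events, kind):
    """Timestamp of the earliest event of the given kind."""
    return next(e.time for e in _ordered(events) if e.kind == kind)


def time_to_decision(events):
    """Elapsed time from the triggering event to the first decision."""
    return _first_time(events, "decision") - _first_time(events, "trigger")


def decision_reversals(events):
    """Count decisions that differ from the decision immediately before them."""
    choices = [e.value for e in _ordered(events) if e.kind == "decision"]
    return sum(1 for prev, cur in zip(choices, choices[1:]) if prev != cur)


def interpretation_divergence(events):
    """Share of assessments that disagree with the most common assessment."""
    labels = [e.value for e in events if e.kind == "assessment"]
    if not labels:
        return 0.0
    modal_count = Counter(labels).most_common(1)[0][1]
    return 1 - modal_count / len(labels)


def recovery_time(events):
    """Elapsed time from the trigger until a 'recovered' state is logged."""
    return _first_time(events, "recovered") - _first_time(events, "trigger")


# Notional log for a single contested information event (all values invented).
T0 = datetime(2026, 1, 1, 8, 0)
LOG = [
    DecisionEvent(T0, "trigger"),
    DecisionEvent(T0 + timedelta(minutes=10), "assessment", "hostile"),
    DecisionEvent(T0 + timedelta(minutes=12), "assessment", "accident"),
    DecisionEvent(T0 + timedelta(minutes=15), "assessment", "hostile"),
    DecisionEvent(T0 + timedelta(minutes=20), "assessment", "hoax"),
    DecisionEvent(T0 + timedelta(minutes=45), "decision", "hold"),
    DecisionEvent(T0 + timedelta(minutes=90), "decision", "respond"),
    DecisionEvent(T0 + timedelta(minutes=120), "decision", "hold"),
    DecisionEvent(T0 + timedelta(minutes=240), "recovered"),
]

if __name__ == "__main__":
    print("time to decision:", time_to_decision(LOG))
    print("decision reversals:", decision_reversals(LOG))
    print("interpretation divergence:", interpretation_divergence(LOG))
    print("recovery time:", recovery_time(LOG))
```

In this notional log, the staff takes 45 minutes to reach a first decision, reverses course twice, splits evenly between the most common interpretation and competing ones, and needs four hours to recover. None of these figures depends on reach, impressions, or sentiment, which is the shift in measurement the paragraph above calls for.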

Conclusion

The NDAA mandate reflects an urgent strategic reality: cognitive warfare is already underway, but its definition and application remain unsettled. Cognitive warfare is not synonymous with information operations, psychological operations, or narrative competition. It is a sustained and adaptive contest over human decision-making. Russia’s chronic conditioning in the Baltic states and China’s cognitive campaign against Taiwan demonstrate that this contest is already shaping allied decision environments. Without a shared definition and evaluative framework, the Joint Force will continue to measure the wrong things and respond too slowly. In future conflicts, especially small wars and stability operations, the actor that preserves decision tempo and coherence under contested information conditions will possess a decisive advantage. Definition is not semantic housekeeping. It is the foundation of doctrine, capability development, and operational success.